The wake-up call came without warning. The NGINX Ingress Controller, a cornerstone of countless Kubernetes deployments, was officially retired. For teams running production workloads, this wasn’t just another deprecation notice. It was time to evolve.

Here’s how we successfully migrated from NGINX Ingress to the next-generation Kubernetes Gateway API, and why it transformed our entire approach to traffic management.

Why We Couldn’t Ignore This Migration

Migrations are painful. They consume time, introduce risk, and rarely spark joy. So why did we embrace this one?

Three compelling reasons:

- NGINX Ingress retirement meant no more security patches or feature updates

- Gateway API represents the future of Kubernetes traffic management with official support and a unified standard

- Modern gateway implementations unlock enterprise-grade features we were already planning to adopt

This wasn’t just about replacing deprecated technology. It was an opportunity to modernize our entire traffic management strategy.

The Old World: NGINX Ingress Controller

Our setup was straightforward. NGINX Ingress handled all external traffic routing with simple Ingress resources scattered across namespaces. It worked reliably, but it had limitations that became increasingly apparent as our infrastructure grew.

The pain points we lived with included only basic traffic splitting, limited protocol support beyond HTTP/HTTPS, configuration sprawl across annotations, and no native service mesh integration.
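To make the annotation sprawl concrete, here is a minimal sketch of how a 90/10 traffic split is typically expressed with the community ingress-nginx controller: a second Ingress for the same host, marked as a canary through annotations. The resource names (`app`, `app-canary`, `app-service-v2`) and the weight are hypothetical, and this assumes the community controller's `nginx.ingress.kubernetes.io/*` annotations rather than our exact manifests.

```yaml
# Primary Ingress serving the stable version (names are illustrative)
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: app
  namespace: app1
spec:
  ingressClassName: nginx
  rules:
    - host: test.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: app-service
                port:
                  number: 5000
---
# Second Ingress for the same host; the split lives entirely in annotations
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: app-canary
  namespace: app1
  annotations:
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-weight: "10"   # roughly 10% of requests
spec:
  ingressClassName: nginx
  rules:
    - host: test.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: app-service-v2   # hypothetical canary Service
                port:
                  number: 5000
```

The routing intent ends up spread over two objects and a pair of string-typed annotations, which is the kind of configuration that becomes hard to audit as the number of services grows.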

The New Paradigm: Kubernetes Gateway API

The Kubernetes Gateway API is a collection of API resources for service networking in Kubernetes, designed as a more expressive and extensible successor to Ingress. It consists of GatewayClass, Gateway, and various Route types (HTTPRoute, TLSRoute, TCPRoute, etc.) that map requests to Services. It improves on Ingress with better role separation, advanced routing features like traffic splitting and header-based routing, cross-namespace support, and portability across implementations.
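As an illustration of the traffic splitting and header-based routing mentioned above, the following HTTPRoute is a minimal sketch. It assumes a Gateway named `gateway` in the same namespace; the `x-canary` header and the `app-service-v2` Service are hypothetical names chosen for the example.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: app-split          # hypothetical name
  namespace: app1
spec:
  parentRefs:
    - name: gateway        # assumed Gateway in the same namespace
  hostnames:
    - test.com
  rules:
    # Header-based routing: requests carrying x-canary: "true" always reach v2
    - matches:
        - headers:
            - name: x-canary     # hypothetical header; match type defaults to Exact
              value: "true"
      backendRefs:
        - name: app-service-v2   # hypothetical second version of the Service
          port: 5000
    # Weighted traffic splitting for everything else (90/10)
    - backendRefs:
        - name: app-service
          port: 5000
          weight: 90
        - name: app-service-v2
          port: 5000
          weight: 10
```

Both behaviors are typed fields in the route spec rather than controller-specific annotations, which is what makes such a route portable across Gateway API implementations.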

Choosing Your Gateway Implementation

One of the Gateway API’s strengths is choice. You’re not locked into a single implementation. We evaluated several options before making our decision:

Istio offers full service mesh capabilities with advanced traffic management, mutual TLS by default, and rich observability features. It’s powerful but comes with operational complexity.

Envoy Gateway provides a lightweight, focused gateway built on the proven Envoy proxy. It offers excellent performance and is simpler to operate than a full service mesh.

Traefik Proxy is a modern, cloud-native reverse proxy and load balancer that supports the Kubernetes Gateway API along with automatic service discovery, dynamic configuration, and built-in support for multiple protocols and backends.

Kong Gateway combines API gateway features with ingress capabilities, perfect for teams already using Kong or needing API management features.

NGINX Gateway Fabric is an open-source implementation of the Kubernetes Gateway API built on NGINX, providing a lightweight and performant ingress solution with native Gateway API support.

We ultimately chose Istio as our gateway controller, since it best balanced our need for advanced traffic management against operational simplicity.

Our Migration Journey

Phase 1: Foundation

We started by installing Istio on our kubeadm cluster and enabling Gateway API CRDs. The process was surprisingly streamlined compared to earlier generations of these tools.

1. Install Istio using its Helm chart (Link).

2. Install the Kubernetes Gateway API CRDs (Link). This lets Kubernetes recognize the Gateway API custom resources, so Istio can watch those objects and act on them.

Phase 2: Understanding the Model

The Gateway API’s separation of concerns took a moment to appreciate. Instead of monolithic Ingress resources mixing infrastructure and routing, we now had distinct layers. The Gateway defines the infrastructure: load balancers, ports, and protocols. HTTPRoutes define the routing rules: hosts, paths, and backend services.
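The layering can be sketched as two resources owned by different teams. This is an illustrative layout, not our exact setup: the `infra` namespace, the `shared-gateway` name, and the `shared-gateway-access` label are all assumptions made for the example.

```yaml
# Owned by the platform team: infrastructure only (listeners, ports, protocols)
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: shared-gateway       # hypothetical name
  namespace: infra           # hypothetical platform namespace
spec:
  gatewayClassName: istio
  listeners:
    - name: http
      protocol: HTTP
      port: 80
      allowedRoutes:
        namespaces:
          from: Selector     # only labeled namespaces may attach routes
          selector:
            matchLabels:
              shared-gateway-access: "true"   # hypothetical label
---
# Owned by the application team: routing only (hosts, paths, backends)
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: route
  namespace: app1            # this namespace must carry the label above
spec:
  parentRefs:
    - name: shared-gateway
      namespace: infra       # cross-namespace attachment to the shared Gateway
  rules:
    - backendRefs:
        - name: app-service
          port: 5000
```

With this split, the platform team decides which namespaces may attach to the Gateway, while application teams change their own routes without touching listener configuration.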

Phase 3: Migration Strategy

We started with non-critical development services, ran both systems in parallel for selected workloads, gradually migrated development environments, and finally cut over production services one namespace at a time. At each step, we validated traffic flow, performance, and monitoring.

Phase 4: Route Transformation

Converting NGINX Ingress resources to HTTPRoutes was surprisingly straightforward. Each HTTPRoute referenced our central Gateway, specified hostnames, and defined routing rules with clear match conditions.
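Behavior that previously lived in annotations usually maps to an explicit filter on the route. As a hedged example, an HTTP-to-HTTPS redirect (commonly enabled in ingress-nginx through the `nginx.ingress.kubernetes.io/ssl-redirect` annotation) can be sketched as an HTTPRoute bound to the plain-HTTP listener. The route name is hypothetical, and the listener name `http` assumes a Gateway that defines a listener with that name.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: https-redirect       # hypothetical name
  namespace: app1
spec:
  parentRefs:
    - name: gateway
      sectionName: http      # attach only to the listener named "http"
  hostnames:
    - test.com
  rules:
    - filters:
        - type: RequestRedirect
          requestRedirect:
            scheme: https    # redirect every plain-HTTP request to HTTPS
            statusCode: 301
```

Not every annotation has a one-to-one equivalent, so each Ingress is worth reviewing individually rather than converting mechanically.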

Sample Routing rule with Gateway creation

```yaml
---
# HTTPRoute: sends all paths for test.com to app-service on port 5000
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: route
  namespace: app1
spec:
  parentRefs:
    - name: gateway
      namespace: app1
  hostnames:
    - test.com
  rules:
    - matches:
        - path:
            type: PathPrefix
            value: "/"
      backendRefs:
        - name: app-service
          port: 5000
---
# Gateway: Istio-managed gateway with HTTPS and HTTP listeners
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: gateway
  namespace: app1
spec:
  gatewayClassName: istio
  listeners:
    # Note: an HTTPS listener normally also needs a tls section (certificateRefs),
    # which this sample omits. Because this listener is limited to example.com,
    # the test.com route above attaches only through the http listener.
    - name: https
      protocol: HTTPS
      port: 443
      hostname: example.com
      allowedRoutes:
        namespaces:
          from: All
    - name: http
      port: 80
      protocol: HTTP
      allowedRoutes:
        namespaces:
          from: All
---
# Service: exposes the gateway pods through fixed NodePorts
apiVersion: v1
kind: Service
metadata:
  name: gateway-istio
  namespace: app1
spec:
  type: NodePort
  selector:
    gateway.networking.k8s.io/gateway-name: gateway
  ports:
    - name: status-port
      port: 15021
      targetPort: 15021
      protocol: TCP
      nodePort: 31394
    - name: http
      port: 80
      targetPort: 80
      protocol: TCP
      nodePort: 32608
    - name: https
      port: 443
      targetPort: 443
      protocol: TCP
      nodePort: 32444
```

Our Migration Blueprint

Istio has historically offered its own Gateway and VirtualService APIs for traffic management; it now also supports the newer, more standardized Kubernetes Gateway API. This API is slated to become the default for managing ingress and egress traffic in Istio clusters.

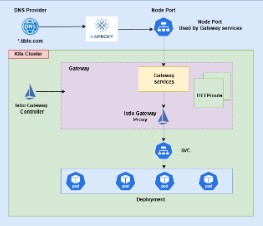

In my implementation, I have specifically configured a Gateway resource using istio as the gateway class, and I defined the routing rules for incoming traffic using only the HTTPRoute resource.

The image below shows the high-level architecture of what we are going to build.

Conclusion

Migrating from NGINX Ingress to the Kubernetes Gateway API with Istio represents a strategic evolution in traffic management, not just a technical upgrade. The Gateway API’s standardized approach delivers enhanced routing flexibility, better role separation, and advanced features like traffic splitting and header-based routing. While the migration required careful planning through phased implementation, the long-term benefits of adopting this modern standard far outweigh the initial effort. As the Gateway API becomes the default for Kubernetes traffic management, early adoption positions teams to leverage future innovations in cloud-native networking.